May 19, 2026

AI-Assisted Code Review in the Theia IDE

by Jonas, Maximilian & Philip at May 19, 2026 12:00 AM

With AI coding agents, developers are producing more code than ever, and code reviews are turning into the bottleneck. The obvious next step is to let AI do the reviews too. Most AI review tools try …

The post AI-Assisted Code Review in the Theia IDE appeared first on EclipseSource.

May 18, 2026

Frontier AI and the next phase of software vulnerability defence

by Mike Milinkovich at May 18, 2026 07:00 AM

As advanced AI lowers the cost of discovering and exploiting software vulnerabilities, Europe must treat open source security and rapid patch deployment as critical resilience infrastructure

Context. Frontier AI systems have crossed an important threshold for cybersecurity and software resilience. They are no longer limited to code completion, triage, or report writing; the most capable models can now assist with vulnerability discovery, exploitability analysis, and, increasingly, patch generation. Anthropic’s Project Glasswing, launched on April 7, 2026, put this shift in the public eye by giving selected critical software operators and maintainers early access to Claude Mythos Preview for defensive security work. Anthropic described Glasswing as an initiative to secure critical software with early access to frontier AI, involving major infrastructure, cloud, financial, and open source actors, and extending access to more than 40 organisations that build or maintain critical software infrastructure. Through our longstanding partnership with the Alpha Omega Project, the Eclipse Foundation has been part of the Glasswing Project since its inception, giving us direct experience with this emerging model of AI-assisted vulnerability discovery. To our knowledge, we are currently the only EU-domiciled organisation participating in the initiative, giving us a unique vantage point on how frontier AI capabilities are beginning to reshape software security and resilience.

Why this matters now. The capability jump appears not to be limited to one model, one vendor, or one ecosystem. The UK AI Security Institute found that Claude Mythos Preview represented a step-change in cyber performance, including autonomous progress on multi-step attack simulations and high success on expert-level cyber tasks. Less than three weeks later, AISI reported that OpenAI GPT-5.5 reached a similar level of performance on its cyber evaluations and was the second model to complete one of AISI’s multi-step cyber-attack simulations end-to-end. Open-weight models are also narrowing the gap: CAISI’s May 2026 evaluation described DeepSeek V4 Pro as the most capable Chinese model it had evaluated across cyber, software engineering, science, reasoning, and mathematics, while still lagging the leading U.S. frontier by roughly eight months. The implication is that capabilities currently concentrated among a small number of frontier AI providers will quickly become cheaper, more widely available, and harder to govern. This makes early institutional experience with frontier defensive AI workflows strategically important.

The immediate risk: a vulnerability and patching imbalance. Critical infrastructure operators, including energy, transportation, water, health, public services, and financial services, are especially exposed because many run complex, legacy, opaque software estates where patching is slow, cautious, and operationally disruptive. This is not a hypothetical concern: the US Government Accountability Office’s (GAO) 2025 review of critical federal legacy systems found outdated languages, unsupported hardware or software, and known cybersecurity vulnerabilities in systems supporting missions such as critical infrastructure, tax processing, and national security. CISA similarly warns that outdated software is a gateway for threat actors in critical infrastructure contexts, including public-facing routers, VPNs, and firewalls used to reach operational systems. NCSC now expects a “vulnerability patch wave”: a rush of software updates across open source and commercial software stacks as AI accelerates discovery of long-standing technical debt.

The systemic threat. AI-assisted vulnerability discovery changes the economics of offense. It lowers the time, expertise, and cost needed to find flaws, validate exploitability, and turn known-but-unpatched vulnerabilities into working attacks. This is particularly dangerous where many institutions depend on the same software, libraries, cloud providers, identity systems, network appliances, or open source software components. For decades, many IT and operational technology environments have treated patching as an operational disruption to be minimized, especially when systems appear to be running correctly. In some cases, this caution is understandable: outages can have safety, economic, regulatory, and reputational consequences. But the balance of risk is changing. When AI can accelerate vulnerability discovery and exploitation, “stable but unpatched” systems can quickly become systematically exposed. Changing this culture and making rapid, well-tested patch deployment a core resilience function may be one of the hardest short-term challenges. The most serious concern is the possibility that threat actors will use AI to discover and exploit “zero day” vulnerabilities before patches are available. The IMF warned on May 7, 2026 that advanced AI models can reduce the time and cost of identifying and exploiting vulnerabilities, increasing the likelihood of correlated failures across widely used systems; it specifically identified financial intermediation, payments, and confidence as systemic risk channels. The same concern applies to cross-sector dependencies among finance, energy, telecommunications, public services, and digital infrastructure.

The Eclipse Foundation’s key finding. Our most important finding from the one-month experiment with Mythos was that operational workflows, validation pipelines, and human oversight matter at least as much as the model. Glasswing’s real significance is not simply that one frontier model found more vulnerabilities. It is that a community of security researchers can iteratively improve prompts, agentic harnesses, target selection methods, reproduction pipelines, and triage workflows, and then apply those methods across multiple market-available models, including cheaper and more widely accessible ones. Anthropic’s own technical write-up reinforces this point: its vulnerability work used agentic scaffolds, containers, target ranking, repeated runs, and validation methods rather than a bare chatbot prompt. NCSC likewise stresses that practical AI cyber capability comes from AI systems—models combined with tools, workflows, and human oversight—not from raw model capability alone.

The opportunity: shared defence capacity at machine speed. The same tools that raise offensive risk can strengthen defense if deployed first and responsibly. AI can continuously scan code and dependencies, generate fuzzing harnesses, prioritize findings by exploitability and exposure, propose patches, produce regression tests, summarize impact for maintainers, and accelerate coordinated vulnerability disclosure. NCSC identifies three high-value defensive uses: reducing attack surface through AI-enabled testing and hardening, improving detection and investigation, and automating mitigation and response where carefully governed. The strategic objective should be to convert AI-driven discovery into AI-assisted remediation faster than adversaries can convert discovery into exploitation. Organisations that are not using AI to strengthen threat detection, prevention, and mitigation risk being outpaced by AI-enabled attackers.

What Europe should do now. Europe should treat AI-enabled open source security as shared digital resilience infrastructure. That means investing in trusted vulnerability discovery, coordinated disclosure, maintainer support, patch validation, and deployment readiness across the software components that underpin critical services. No single vendor, foundation, institution, or member state can solve this alone; it requires an ecosystem response. Europe should also ensure that trusted public institutions and open source ecosystems remain directly involved in frontier AI cybersecurity evaluation and remediation efforts, rather than relying on commercial actors outside Europe.

Open source is not the problem; it is the solution. The risk does not come from open source. It comes from the fact that many organisations depend on software they do not fully understand, cannot fully inventory, and cannot patch quickly enough. Open source makes that dependency visible, auditable, and repairable. Open source governance structures may become increasingly important in AI-enabled remediation ecosystems because their transparency, global maintainer communities, reproducible builds, public issue tracking, coordinated disclosure practices, and shared security tooling make them uniquely capable of operating at ecosystem scale. CISA’s Open Source Software Security Roadmap explicitly recognizes OSS as a public good supported by diverse communities and calls for supporting critical OSS components relied on by government and critical infrastructure. Furthermore, AI is enabling increasingly sophisticated reverse engineering tools that can generate source code from proprietary binaries, making “security through obfuscation” an implausible strategy.

Conclusion. This is a global software resilience issue, with direct implications for Europe’s security, digital sovereignty, and strategic autonomy. Critical infrastructure and financial services everywhere rely on globally developed open source components, shared platforms, and common supply chains. Local champions will matter, but isolated national responses will not be sufficient. The priority is coordinated action: shared AI-enabled vulnerability discovery, trusted disclosure channels, maintainer support, rapid patch production, dependency intelligence, and deployment capacity across public and private sectors. Ultimately, resilience will depend not only on AI capability itself, but on trusted ecosystems capable of coordinating remediation rapidly across shared infrastructure. The Eclipse Foundation will work with public institutions, industry, and open source communities to help strengthen these shared resilience capabilities across the European software ecosystem. Open source should be treated not as the weak link, but as the coordination layer through which Europe and its global partners can find, fix, validate, and deploy security improvements at the speed modern resilience now requires.

May 16, 2026

Counting and Collecting Collectors

by Donald Raab at May 16, 2026 06:55 AM

Zero-argument methods on Java Collectors should return static instances.

Photo by Yusuf Onuk on Unsplash

When new gets old

I love Java, but scratch my head sometimes wondering why so much needless garbage is generated. Do you know how many static Collector instances are available in the Collectors static utility class in the JDK? Zero.

Every Collector you create using the Collectors utility class is new. For static methods on Collectors that take no parameters, new gets old quick.

Ask yourself, “How many copies of Collectors.toList() do we need in a JVM?” If you answered one, you are correct.

For anyone not familiar with Collector and Collectors here’s a simple example used with a List in Java to filter even integers into another result List, which is created by Collectors.toList().

    @Test
    public void collectorsToList()
    {
        List<Integer> integers = List.of(1, 2, 3, 4, 5);
        List<Integer> evens = integers.stream()
                .filter(e -> e % 2 == 0)
                .collect(Collectors.toList());
        assertEquals(List.of(2, 4), evens);
    }

If we look at the code for Collectors.toList(), every invocation creates a new CollectorImpl.

The implementation of Collectors.toList() returning a new CollectorImpl instance

Now, interestingly, CollectorImpl was converted to a record class at some point after records became a standard feature in Java 16. As CollectorImpl is package private, this was a “safe” change, but it did cause a minor behavioral change that I witnessed right before I started writing this blog. In Java 17, CollectorImpl was a static class that did not override the equals and hashCode methods. When CollectorImpl became a record, it was quietly provided with equals and hashCode methods.

The following test will pass in Java 25. Try and run the same test in Java 17. It should fail on the equals and hashCode assertions.

    @Test
    public void equalsAndHashCodeForCollectorsToListInJava25()
    {
        var collector1 = Collectors.toList();
        var collector2 = Collectors.toList();
        assertNotSame(collector1, collector2);
        // CollectorImpl is record
        assertEquals(collector1, collector2);
        assertEquals(collector1.hashCode(), collector2.hashCode());
    }

The assertNotSame shows that Collectors.toList() returns a new Collector instance each time. The fact that collector1 and collector2 are now equal has an interesting side-effect if the instances are put into a Set. This behavior will appear different between Java 17 and Java 21, for those that stick to LTS releases. On Java 17, the following test passes instead.

    @Test
    public void equalsAndHashCodeForCollectorsToListInJava17()
    {
        var collector1 = Collectors.toList();
        var collector2 = Collectors.toList();
        assertNotSame(collector1, collector2);
        // CollectorImpl is a static class without equals and hashCode
        assertNotEquals(collector1, collector2);
        assertNotEquals(collector1.hashCode(), collector2.hashCode());
    }

Notice how collector1 and collector2 are not equal and have different hashCode values.
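The difference between the two tests comes down to how a plain class and a record treat equality. The following is a minimal sketch of that difference in isolation, assuming Java 16 or later. PlainHolder and RecordHolder are hypothetical stand-ins invented for illustration; they are not the JDK's CollectorImpl.

```java
public class RecordVsClassEquality
{
    // Hypothetical stand-in for a holder written as a plain static class.
    // equals and hashCode are inherited from Object, so equality is identity.
    static final class PlainHolder
    {
        private final String name;

        PlainHolder(String name)
        {
            this.name = name;
        }
    }

    // Hypothetical stand-in for the same holder written as a record.
    // The compiler derives equals and hashCode from the components.
    record RecordHolder(String name)
    {
    }

    static boolean plainHoldersEqual()
    {
        return new PlainHolder("toList").equals(new PlainHolder("toList"));
    }

    static boolean recordHoldersEqual()
    {
        return new RecordHolder("toList").equals(new RecordHolder("toList"));
    }

    static boolean recordHashCodesEqual()
    {
        return new RecordHolder("toList").hashCode() == new RecordHolder("toList").hashCode();
    }

    public static void main(String[] args)
    {
        System.out.println(plainHoldersEqual());    // false
        System.out.println(recordHoldersEqual());   // true
        System.out.println(recordHashCodesEqual()); // true
    }
}
```

Two distinct record instances with equal components compare as equal, while two distinct instances of the plain class do not. This is the same shift the two tests above observe for Collectors.toList().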

Collectors are temporary, so how can we see them?

Collector instances are created and then quickly garbage collected after a Stream finishes processing, but we can see them on our heaps if we know when to look, or if we monitor instance creation using a profiling tool. If you’re wondering how many CollectorImpl instances you might be creating every day, I will demonstrate running an analysis on a code base using the popular SonarQube for IDE plugin for IntelliJ and then searching for how many CollectorImpl instances we can see on the heap while the process is running. First, I start IntelliJ with the plugin installed and find the SonarLintServerCli process running locally using jps -l.

Process id 2895 is what we will look at while an analysis is running

I kicked off a full analysis of the Eclipse Collections code base, which is large. I thought this would be a good code base to test with, as a large code base should cause a lot of temporary objects to be created and garbage collected. The SonarQube analysis process runs pretty fast considering the size of the Eclipse Collections code base. It completed in just a few minutes. This was enough time for me to look at some jmap heap histograms while the analysis process was running and find some Stream, Collector, and Lambda instances on the heap as the analysis work was being completed. The command I ran to look at the histogram of the Java heap was as follows.

jmap -histo 2895 | head -n 102 | grep "CollectorImpl\|ReferencePipeline\|Lambda"

I look for CollectorImpl, ReferencePipeline (aka Stream), and Lambda instances in the top 100 memory consumers of the Java heap. I use a line count of 102 to account for the headings in the jmap output.

I ran this a few times, and saw various numbers of each of these types of objects. The following jmap run had the largest number of CollectorImpl instances, so I decided to share it. I can’t tell you where and why the plugin is creating these. The first number column is the rank of each class on the heap based on memory occupied, from greatest to least. The second number is the number of instances in memory. The third number is the number of bytes of memory the instances of each class are occupying.

CollectorImpl reached number 57 of memory consumers on the heap with 44K instances taking 1.4 MB of RAM

There’s no way to tell what type of Collector is being created here, but I would guess that some number of these might be Collectors.toList() or other zero-argument Collector instances.

While I was looking for CollectorImpl, I thought it would be interesting to also look for ReferencePipeline (aka. Stream) on the heap. Showing up in the number three spot at 1.9 million instances taking 111MB is ReferencePipeline$Head. This is the object that gets created by calling collection.stream(). ReferencePipeline$2 is stream().filter(), and ReferencePipeline$3 is stream().map(). These Stream instances all get collected quickly, but this is a large volume of them. I also thought it was interesting to see how many Lambda instances get created. They also get collected quickly but this is a large number of temporary objects.

I guessed that the SonarQube plugin for IntelliJ might be using Stream and Lambdas for in-memory Collection processing. This jmap output seems to confirm that. This plugin and a lot of other Java applications and libraries written over the past twelve years that use Java Stream would probably benefit from zero-argument Collector methods in Collectors returning static instances.

If you want to learn more about jps, jmap and three other Java command line tools that are AI friendly, check out the following blog.

I Used Five Command Line Java Tools This Week and I Feel Good

There can be only one

Eclipse Collections has an equivalent utility class to Collectors named Collectors2. In Eclipse Collections 11.1, which was released in July 2022, the equivalent static method Collectors2.toList() was changed to return a static Collector instance. Several other zero-argument methods were changed to return static instances as well.

The following test will pass with Eclipse Collections 11.1 or above, on all versions of Java back to Java 8.

    @Test
    public void equalsAndHashCodeForCollectors2ToListInEclipseCollections()
    {
        var collector1 = Collectors2.toList();
        var collector2 = Collectors2.toList();
        assertSame(collector1, collector2);
        assertEquals(collector1, collector2);
        assertEquals(collector1.hashCode(), collector2.hashCode());
    }

This behavior is not impacted with CollectorImpl becoming a record, but it is possible that static methods on Collectors2 that take parameters will now return Collector instances that return true for equals if their components are equal.
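For readers curious what returning a static instance from a zero-argument method could look like, here is a minimal sketch using only the JDK. CachedCollectors is a hypothetical class written for illustration; it is not the Eclipse Collections implementation of Collectors2, and it simply wraps the JDK's Collectors.toList() in a shared constant.

```java
import java.util.List;
import java.util.stream.Collector;
import java.util.stream.Collectors;
import java.util.stream.Stream;

// Hypothetical utility class, for illustration only.
public final class CachedCollectors
{
    // One shared instance. Sharing is safe here because this Collector holds
    // no per-stream state; each stream gets its own list from the supplier.
    private static final Collector<Object, ?, List<Object>> TO_LIST = Collectors.toList();

    private CachedCollectors()
    {
    }

    // The unchecked cast only changes the compile-time element type.
    @SuppressWarnings("unchecked")
    public static <T> Collector<T, ?, List<T>> toList()
    {
        return (Collector<T, ?, List<T>>) (Collector<?, ?, ?>) TO_LIST;
    }

    public static void main(String[] args)
    {
        List<Integer> evens = Stream.of(1, 2, 3, 4, 5)
                .filter(e -> e % 2 == 0)
                .collect(CachedCollectors.toList());
        System.out.println(evens); // [2, 4]
        // Every call hands back the same instance.
        System.out.println(CachedCollectors.toList() == CachedCollectors.toList()); // true
    }
}
```

The design choice is the usual one for stateless singletons: a private constant plus a generic accessor that performs one unchecked cast, so callers keep full type inference without allocating a new Collector per call.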

Comparing JDK new instances vs. EC static instances

The following test compares several equivalent Collector instances from the JDK and Eclipse Collections. The code shows that every call to a static method on Collectors will result in a new instance. The calls to the equivalent static methods on Collectors2 in Eclipse Collections will return a single instance.

    import java.util.Set;
    import java.util.function.Function;
    import java.util.stream.Collector;
    import java.util.stream.Collectors;

    import org.eclipse.collections.api.block.function.primitive.IntToObjectFunction;
    import org.eclipse.collections.api.factory.Lists;
    import org.eclipse.collections.api.factory.primitive.ObjectIntMaps;
    import org.eclipse.collections.impl.block.factory.HashingStrategies;
    import org.eclipse.collections.impl.collector.Collectors2;
    import org.eclipse.collections.impl.factory.HashingStrategySets;
    import org.eclipse.collections.impl.list.primitive.IntInterval;
    import org.junit.jupiter.api.Test;

    import static org.junit.jupiter.api.Assertions.assertEquals;
    import static org.junit.jupiter.api.Assertions.assertNotSame;
    import static org.junit.jupiter.api.Assertions.assertSame;

    public class CountingCollectorsTest
    {
        private static final int COUNT = 1_000;
        private static final int JDK_COUNT = 1_000;
        public static final int EC_COUNT = 1;

        @Test
        public void countingUniqueCollectors()
        {
            var jdkCollectors = ObjectIntMaps.mutable
                    .<IntToObjectFunction<Collector<?, ?, ?>>>empty()
                    .withKeyValue(i -> Collectors.toList(), JDK_COUNT)
                    .withKeyValue(i -> Collectors.toSet(), JDK_COUNT)
                    // To simulate Bag type in JDK
                    .withKeyValue(
                            i -> Collectors.groupingBy(
                                    Function.identity(),
                                    Collectors.counting()), JDK_COUNT)
                    .withKeyValue(i -> Collectors.toUnmodifiableList(), JDK_COUNT)
                    .withKeyValue(i -> Collectors.toUnmodifiableSet(), JDK_COUNT)
                    .withKeyValue(i -> Collectors.joining(), JDK_COUNT)
                    .toImmutable();

            var ecCollectors = ObjectIntMaps.mutable
                    .<IntToObjectFunction<Collector<?, ?, ?>>>empty()
                    .withKeyValue(i -> Collectors2.toList(), EC_COUNT)
                    .withKeyValue(i -> Collectors2.toSet(), EC_COUNT)
                    .withKeyValue(i -> Collectors2.toBag(), EC_COUNT)
                    .withKeyValue(i -> Collectors2.toImmutableList(), EC_COUNT)
                    .withKeyValue(i -> Collectors2.toImmutableSet(), EC_COUNT)
                    .withKeyValue(i -> Collectors2.toImmutableBag(), EC_COUNT)
                    .withKeyValue(i -> Collectors2.makeString(), EC_COUNT)
                    .toImmutable();

            var all = Lists.immutable.with(jdkCollectors, ecCollectors);
            all.forEach(map ->
                    map.forEachKeyValue(
                            CountingCollectorsTest::countUniqueCollectors));
        }

        private static void countUniqueCollectors(
                IntToObjectFunction<Collector<?, ?, ?>> function,
                int expectedCount)
        {
            Set<Collector<?, ?, ?>> identitySet =
                    HashingStrategySets.mutable.with(
                            HashingStrategies.identityStrategy());
            int uniqueCount = IntInterval.oneTo(COUNT)
                    .collect(function, identitySet)
                    .size();
            assertEquals(expectedCount, uniqueCount);
        }
    }

I had to use an identity set to test with here, because a regular Set will use hashCode and equals. Again, this behavior changed between Java 17 and 21, when CollectorImpl was changed into a record. For all of the JDK Collectors, 1,000 instances are created for each Collector type. For Eclipse Collections, one instance is created for each Collector type.
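The reason for the identity set can be shown with the JDK alone. The sketch below is an illustration under stated assumptions: Key is a hypothetical record standing in for any type whose instances are equal by value, and the identity set is built with Collections.newSetFromMap over an IdentityHashMap rather than with the Eclipse Collections HashingStrategySets used in the test above.

```java
import java.util.Collections;
import java.util.HashSet;
import java.util.IdentityHashMap;
import java.util.Set;

public class IdentitySetSketch
{
    // Hypothetical record: every instance created below is equal by value.
    record Key(String name)
    {
    }

    // A regular HashSet uses equals and hashCode, so equal instances collapse.
    static int countWithHashSet(int instances)
    {
        Set<Key> set = new HashSet<>();
        for (int i = 0; i < instances; i++)
        {
            set.add(new Key("same"));
        }
        return set.size();
    }

    // An identity-based set compares references, so every new instance counts.
    static int countWithIdentitySet(int instances)
    {
        Set<Key> set = Collections.newSetFromMap(new IdentityHashMap<>());
        for (int i = 0; i < instances; i++)
        {
            set.add(new Key("same"));
        }
        return set.size();
    }

    public static void main(String[] args)
    {
        System.out.println(countWithHashSet(1_000));     // 1
        System.out.println(countWithIdentitySet(1_000)); // 1000
    }
}
```

With value-based equality, a regular Set would report one element no matter how many instances were allocated, which is why counting allocations needs reference identity.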

There can be only one.

-Highlander

Saving the planet, one Collector at a time

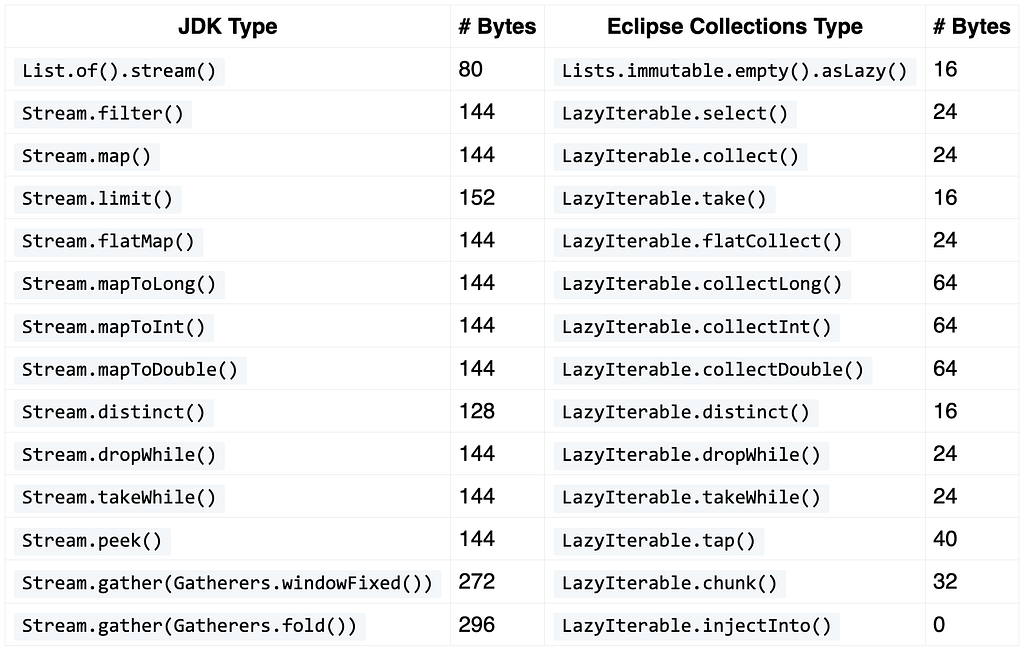

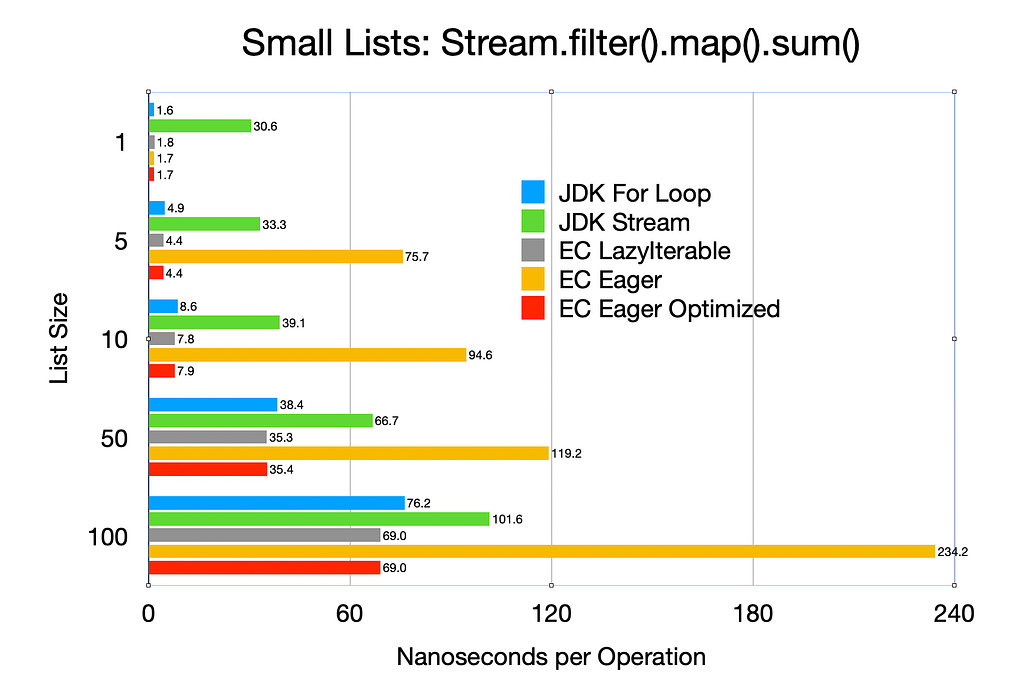

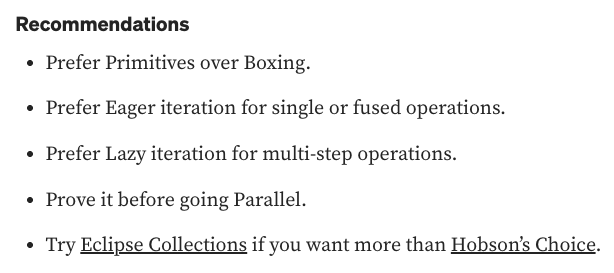

I realized some time around 2022 that Collectors2 could be optimized by returning static instances for zero-argument methods. There are more zero-argument methods on Collectors2 in Eclipse Collections than there are on Collectors in the JDK, so this optimization is extra helpful if you use Eclipse Collections Collector types with Stream. The following Venn diagram shows the number of non-overloaded methods on several types in the JDK and Eclipse Collections with Collectors and Collectors2 over on the right side.

From Appendix A of my book, “Eclipse Collections Categorically”

There are currently fifteen static Collector instances in Collectors2.

I love hidden optimizations like this where we can reduce the need to create new instances of essentially the same things. If you find yourself using Collectors with zero-argument methods a lot, maybe consider using Collectors2 from Eclipse Collections.

We’re always on the lookout for memory savings in Eclipse Collections, and you get a ton of memory savings alternatives already in Eclipse Collections. Using rich APIs directly on Eclipse Collections types, like the extensive converter methods, removes the need to create Collector instances altogether. This might also be a good argument for adding more converter methods directly to Stream like toList(). It is certainly another good reason to use Stream.toList() instead of Collectors.toList().

What if Java had Symmetric Converter Methods on Collection?
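As a small illustration of the Stream.toList() suggestion, here is the even-number filter from the start of this post rewritten without a Collector. This is a sketch assuming Java 16 or later, where Stream.toList() is available; the class and method names are made up for the example. Note that Stream.toList() returns an unmodifiable List, so it is not a drop-in replacement where the result is mutated afterwards.

```java
import java.util.List;

public class StreamToListSketch
{
    // Filters even integers without creating any Collector instance.
    static List<Integer> evens(List<Integer> integers)
    {
        return integers.stream()
                .filter(e -> e % 2 == 0)
                .toList();
    }

    public static void main(String[] args)
    {
        System.out.println(evens(List.of(1, 2, 3, 4, 5))); // [2, 4]
    }
}
```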

It’s possible that a future version of the JDK Collectors utility class could provide optimized zero-argument methods returning static Collector instances. I think this would be a good change to make, as it would silently remove billions of unnecessary Collector instances from being created again. Modern garbage collectors are very fast, but the fastest garbage to collect is the garbage that you didn’t create in the first place. Stop spending time collecting up all those duplicate zero-argument Collectors!

Thanks for reading!

I am the creator of and committer for the Eclipse Collections OSS project, which is managed at the Eclipse Foundation. Eclipse Collections is open for contributions. I am the author of the book, Eclipse Collections Categorically: Level up your programming game.

May 13, 2026

Announcing Eclipse Ditto Release 3.9.0

May 13, 2026 12:00 AM

The Eclipse Ditto team is excited to announce the availability of a new minor release, including new features: Ditto 3.9.0.

The focus of this release is on policies, multi-tenancy and reuse. Operating Ditto for several tenants sharing one cluster — or for organizations that want to apply consistent governance and audit rules across many policies — has historically required either duplicating policy entries across every tenant’s policy or maintaining ad-hoc policy templates outside Ditto. Ditto 3.9.0 brings these patterns into the platform itself with three complementary building blocks:

- Namespace-scoped policy entries let a single policy carry entries that only apply to Things in specific namespaces — so a tenant’s policy can be expressed as a small set of namespace-aware rules instead of as separate policies per tenant.

- Namespace root policies let operators configure policies that are transparently merged into every policy of a given namespace, without each policy author having to opt in. This is the natural home for cross-tenant concerns: governance and audit READ access, compliance monitoring, break-glass SRE accounts, or forced minimum logging.

- Entry-level references for policies and policy imports replace duplicated subject/resource blocks with a uniform mechanism for additively merging from other entries, both inside the same policy and from imported “template” policies. Imported entries can declare exactly what kinds of additions (subjects, resources, namespaces) referencing entries are allowed to contribute via allowedAdditions, and transitiveImports opt selected imports in to multi-level resolution. The new resolved policy view (policy-view=resolved) returns the merged effective policy after imports and namespace-root resolution, which makes debugging effective permissions much easier.

To round off the multi-tenancy story, gateway-level namespace access control restricts which namespaces an authenticated request may touch — for example by matching a JWT claim — so that a token issued for one tenant cannot reach Things in another tenant’s namespace.

Beyond policies, this release also brings a new WoT Discovery “Thing Directory” endpoint, dynamically scoping a WoT Thing Description to the requesting user’s permissions, partial change notifications based on Policy READ permissions, the checkPermissions API for all protocols (WebSocket, AMQP and MQTT, not only HTTP), encryption key rotation for connection secrets, an empty() RQL filter, the fn:format() placeholder pipeline function, slow search query logging, configurable custom MongoDB search indexes, per-namespace activity-check configuration, a history exploration mode in the Explorer UI and quite a number of further enhancements.

On the operations side, Ditto now builds and runs on Java 25, and the bundled Helm chart no longer ships an ingress-nginx controller; the choice of ingress controller is left to the operator. To reflect this breaking change, the Helm chart moves to a major version 4.0.0, and from now on the chart follows its own semantic versioning, decoupled from Ditto’s appVersion. Chart-only changes will increment the chart version independently of the Ditto release cycle.

Adoption

Companies are willing to show their adoption of Eclipse Ditto publicly: https://iot.eclipse.org/adopters/?#iot.ditto

When you use Eclipse Ditto it would be great to support the project by putting your logo there.

Changelog

The main improvements and additions of Ditto 3.9.0 are:

- Namespace-scoped policy entries to limit a policy entry’s scope to a configured set of Thing namespaces

- Namespace root policies which are transparently merged into all policies of a configured namespace

- Limiting which namespaces are accessible at the gateway level via configurable, placeholder-based rules

- Entry-level references in policies and policy imports, with transitiveImports for selective multi-level resolution and allowedAdditions to control what may be merged in

- Resolved policy view API option returning the merged effective policy after imports and namespace-root resolution

- Partial change notifications based on Policy READ permissions

- checkPermissions API for all protocols (previously only HTTP), making permission checks available via WebSocket, AMQP and MQTT

- WoT Discovery “Thing Directory” endpoint following the W3C WoT Discovery specification

- Dynamically scoping a WoT Thing Description to the requesting user’s policy permissions

- Encryption key rotation for connectivity service secrets, including DevOps-triggered re-encryption of stored credentials

- X509 client-certificate authentication to MongoDB, with a configurable CA root certificate for the TLS connection

- empty() RQL filter to match absent or empty fields in search and event filters

- fn:format() placeholder pipeline function for correlated field extraction from JSON arrays

- Slow search query logging with configurable threshold to identify expensive queries

- Configurable custom MongoDB search indexes for tuning Ditto search to specific workloads

- Per-namespace activity-check configuration to vary entity passivation timeouts per namespace

- Live entities Prometheus metric per namespace and entity type

- OpenID Connect prerequisite-conditions for early JWT rejection (e.g. audience validation)

- Local/relative tm:ref references in WoT ThingModel resolution

- ditto:deprecationNotice WoT extension term to mark deprecated properties, actions and events

- “Time Travel” mode in the Explorer UI to inspect a Thing’s state at any past revision or timestamp, alongside live and historical event browsing

The following non-functional work is also included:

- Building and running Ditto with Java 25

- Optimizing the MongoReadJournal aggregation pipelines and the ThingEventEnricher hot path

- JFR-guided CPU optimisations in the things, things-search, gateway and connectivity services

- Stackless 4xx exceptions (feature-toggled) to eliminate stack-capture overhead on flow-control errors

- Configurable SSE publisher backpressure buffer size to suppress noisy backpressure WARN logs from slow SSE consumers

- Comprehensive JavaDoc for the public WoT model interfaces

- Helm chart bumped to 4.0.0 with the bundled ingress-nginx controller removed; operators provide their own ingress controller, and the chart now uses its own semantic version, decoupled from Ditto’s appVersion

- Updating dependencies to their latest versions

- Providing additional configuration options to Helm values

The following notable fixes are included:

- Surfacing enforcement and validation errors for fire-and-forget commands instead of silently swallowing them

- Fixing checkPermissions ignoring permissions inherited from imported policies

- Fixing partial-access SSE event filtering for subscribers with multiple authorization subjects

- Fixing a MongoDB aggregation pipeline performance regression affecting connections_journal reads

- Fixing a Kafka consumer crash loop triggered by messages with blank header values

- Fixing a Fluency thread leak in the connection logger publisher

- Fixing subscription handling for multiple topics combined with extra fields in connectivity outbound mapping

- Redacting sensitive header values in DittoHeaders.toString() to prevent accidental log leaks

- Converting transient enforcement AskTimeoutException to HTTP 503 instead of 500 during rolling restarts, so clients see a retryable error

- Fixing ssl-config not being picked up for self-signed certificates against the OpenID Connect issuer

- Closing a shadowing vulnerability in namespace-policies by routing namespace-policy entries through rewritten labels

Please have a look at the 3.9.0 release notes for more detailed information on the release.

Artifacts

The new Java artifacts have been published at the Eclipse Maven repository as well as Maven central.

The Ditto JavaScript client release was published on npmjs.com:

The Docker images have been pushed to Docker Hub:

- eclipse/ditto-policies

- eclipse/ditto-things

- eclipse/ditto-things-search

- eclipse/ditto-gateway

- eclipse/ditto-connectivity

The Ditto Helm chart has been published to Docker Hub:

–

The Eclipse Ditto team

Helm chart now ingress controller agnostic

May 13, 2026 12:00 AM

Starting with Helm chart version 4.0.0, Eclipse Ditto’s Kubernetes deployment is no longer tied to a specific ingress controller. The chart now provides clean, standard Kubernetes Ingress resources that work with any ingress controller — whether you use ingress-nginx, Traefik, HAProxy, Kong, or any other implementation.

What changed

Previously, the Ditto Helm chart bundled an entire ingress-nginx controller deployment (854 lines of RBAC, Deployments, Services, admission webhooks, and IngressClass) and hardcoded nginx.ingress.kubernetes.io/* annotations across all Ingress resource templates. This forced users to use ingress-nginx and prevented adoption of other ingress controllers.

In version 4.0.0, we made the following changes:

- Removed the bundled ingress-nginx controller deployment — users bring their own ingress controller

- Removed all nginx-specific annotations from Ingress resources — annotations are now empty by default

- Simplified path types to standard Prefix and Exact: no more controller-specific regex paths

- Added per-group enabled flags: each route group (api, ws, ui, devops) can be independently toggled

- Replaced the backendSuffix pattern with explicit service.name references
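To illustrate what the two standard path types mean, here is a minimal Java sketch of Exact and Prefix matching as the Kubernetes Ingress documentation describes them. It is an approximation for illustration only, not code from the chart or from any ingress controller; the class and method names are hypothetical, and empty path elements are simply dropped.

```java
import java.util.Arrays;
import java.util.List;
import java.util.stream.Collectors;

// Hypothetical illustration class; not part of Ditto, the chart, or Kubernetes.
final class IngressPathTypes {

    // Exact: the request path must equal the rule path (case-sensitive).
    static boolean matchesExact(String rulePath, String requestPath) {
        return rulePath.equals(requestPath);
    }

    // Prefix: split both paths on '/' and compare them element by element.
    static boolean matchesPrefix(String rulePath, String requestPath) {
        List<String> rule = elements(rulePath);
        List<String> request = elements(requestPath);
        return request.size() >= rule.size()
                && request.subList(0, rule.size()).equals(rule);
    }

    // Simplification: empty elements (leading, trailing or doubled slashes) are dropped.
    private static List<String> elements(String path) {
        return Arrays.stream(path.split("/"))
                .filter(element -> !element.isEmpty())
                .collect(Collectors.toList());
    }

    public static void main(String[] args) {
        System.out.println(matchesPrefix("/api", "/api/2/things")); // true
        System.out.println(matchesPrefix("/api", "/apidoc"));       // false
        System.out.println(matchesExact("/", "/ui"));               // false
    }
}
```

Because Prefix matching works on whole path elements, a /api rule does not swallow requests for /apidoc, so both can coexist as Prefix paths.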

Breaking changes

This is a major version bump (3.x → 4.0.0) with breaking changes to values.yaml:

- ingress.controller.*: entire block removed (no longer deploying a controller)

- ingress.annotations: global nginx-specific annotations removed

- ingress.api.kubernetesAuthAnnotations: removed

- ingress.className: default changed from "nginx" to "" (empty)

- ingress.host: default changed from "localhost" to "" (catch-all)

- backendSuffix: replaced by service.name in each path entry

- All per-group nginx-specific annotation blocks: removed

New values.yaml structure

The ingress section is now clean and controller-agnostic:

ingress:
  enabled: false
  className: ""  # Set to your controller's IngressClass
  host: ""  # Optional hostname; if empty, no host rule (catch-all)
  tls: []
  api:
    enabled: true
    annotations: {}  # Add your controller-specific annotations here
    paths:
      - path: /api
        pathType: Prefix
        service:
          name: gateway
      - path: /stats
        pathType: Prefix
        service:
          name: gateway
      - path: /overall
        pathType: Prefix
        service:
          name: gateway
  ws:
    enabled: true
    annotations: {}
    paths:
      - path: /ws
        pathType: Prefix
        service:
          name: gateway
  ui:
    enabled: true
    annotations: {}
    # defaultBackend serves as the catch-all for unmatched paths (e.g. the landing page)
    defaultBackend:
      service:
        name: nginx
    paths:
      - path: /
        pathType: Exact
        service:
          name: nginx
      - path: /apidoc
        pathType: Prefix
        service:
          name: swaggerui
      - path: /ui
        pathType: Prefix
        service:
          name: dittoui
  devops:
    enabled: true
    annotations: {}
    paths:
      - path: /devops
        pathType: Prefix
        service:
          name: gateway
      - path: /health
        pathType: Prefix
        service:
          name: gateway
      - path: /status
        pathType: Prefix
        service:
          name: gateway
      - path: /api/2/connections
        pathType: Prefix
        service:
          name: gateway

Deployment modes

The chart supports three deployment modes:

Standalone nginx pod (default, unchanged)

The standalone nginx reverse proxy remains the default for local and development setups. No ingress controller needed — access Ditto via port-forward:

helm install ditto ditto/ditto

kubectl port-forward svc/ditto-nginx 8080:8080

Ingress with nginx pod

Use Ingress resources for external access while keeping the nginx pod for the landing page and static resources:

helm install ditto ditto/ditto \
  --set ingress.enabled=true \
  --set ingress.className=nginx \
  --set ingress.host=ditto.example.com

Ingress only (no nginx pod)

For production setups where the landing page is not needed, disable the nginx pod and the UI ingress’s root path:

helm install ditto ditto/ditto \
  --set ingress.enabled=true \
  --set ingress.className=nginx \
  --set ingress.host=ditto.example.com \
  --set nginx.enabled=false

Controller-specific configuration

Since controller-specific behavior (authentication, CORS, timeouts, URL rewriting) is now the user’s responsibility, here are examples for common ingress controllers.

ingress-nginx

For the Ditto UI and Swagger API docs, ingress-nginx requires regex paths and a rewrite annotation to strip the path prefix before forwarding to the backend.

Note that when use-regex is enabled, the root path / must be specified as /$ with pathType: ImplementationSpecific to ensure it matches only the exact root path and does not conflict with the ingress controller’s default catch-all:

ingress:
  enabled: true
  className: nginx
  host: ditto.example.com
  api:
    annotations:
      nginx.ingress.kubernetes.io/proxy-read-timeout: "70"
      nginx.ingress.kubernetes.io/proxy-send-timeout: "70"
  ws:
    annotations:
      nginx.ingress.kubernetes.io/proxy-read-timeout: "86400"
      nginx.ingress.kubernetes.io/proxy-send-timeout: "86400"
      nginx.ingress.kubernetes.io/proxy-buffering: "off"
  ui:
    annotations:
      nginx.ingress.kubernetes.io/use-regex: "true"
      nginx.ingress.kubernetes.io/rewrite-target: /$2
    paths:
      - path: /$
        pathType: ImplementationSpecific
        service:
          name: nginx
      - path: /apidoc(/|$)(.*)
        pathType: ImplementationSpecific
        service:
          name: swaggerui
      - path: /ui(/|$)(.*)
        pathType: ImplementationSpecific
        service:
          name: dittoui
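The effect of the regex paths combined with rewrite-target: /$2 can be approximated with java.util.regex. The following is a minimal sketch under stated assumptions: it anchors the pattern at the start of the path, ignores any case-insensitivity the controller may apply, and treats a missing second capture group as empty. The class and method names are hypothetical; the real rewrite happens inside NGINX.

```java
import java.util.regex.Matcher;
import java.util.regex.Pattern;

// Hypothetical illustration class; ingress-nginx performs the real rewrite inside NGINX.
final class RewriteTargetSketch {

    // Approximates rewrite-target "/$2": returns "/" plus the second capture group,
    // or the unchanged request path when the regex path does not match.
    static String rewrite(String regexPath, String requestPath) {
        Matcher matcher = Pattern.compile("^" + regexPath).matcher(requestPath);
        if (!matcher.find()) {
            return requestPath;
        }
        // Assumption: a pattern without a second group (such as "/$") yields an empty "$2".
        String secondGroup = matcher.groupCount() >= 2 ? matcher.group(2) : "";
        return "/" + secondGroup;
    }

    public static void main(String[] args) {
        System.out.println(rewrite("/ui(/|$)(.*)", "/ui/index.html")); // /index.html
        System.out.println(rewrite("/apidoc(/|$)(.*)", "/apidoc"));    // /
        System.out.println(rewrite("/ui(/|$)(.*)", "/uix"));           // /uix
    }
}
```

The (/|$) group is what keeps /ui from matching unrelated paths such as /uix, while the trailing (.*) captures everything that should be forwarded to the backend.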

Traefik

Traefik requires a Middleware CRD to strip path prefixes. First, create the middleware in the Ditto namespace:

apiVersion: traefik.io/v1alpha1
kind: Middleware
metadata:
  name: strip-prefix
  namespace: ditto
spec:
  stripPrefix:
    prefixes:
      - /ui
      - /apidoc

Then reference it in the ingress configuration:

ingress:
  enabled: true
  className: traefik
  host: ditto.example.com
  ui:
    annotations:
      traefik.ingress.kubernetes.io/router.middlewares: ditto-strip-prefix@kubernetescrd

HAProxy

ingress:
  enabled: true
  className: haproxy
  host: ditto.example.com
  ui:
    annotations:
      haproxy.org/path-rewrite: "/ /"

What stays the same

- The standalone nginx pod for local/development deployments is unchanged

- The OpenShift Route template is unchanged

- All Ditto service templates (gateway, things, policies, connectivity, etc.) are unchanged

- The 4 route groups (api, ws, ui, devops) cover all Ditto endpoints — the root landing page is now part of the ui group

Migrating from 3.x

- Install your ingress controller separately (e.g., via its own Helm chart)

- Update your values.yaml to match the new structure: replace backendSuffix with service.name, remove ingress.controller.* and global annotations

- Add controller-specific annotations to the appropriate route groups

- Upgrade the chart to version 4.0.0

–

The Eclipse Ditto team

May 03, 2026

What if Java Consistently Used Intention Revealing Names for Inner Classes?

by Donald Raab at May 03, 2026 05:41 AM

The pain of reading and understanding a class without a name.

Photo by Brett Jordan on Unsplash

Jmap and JOL are your friends

I’ve been teaching developers how to use both jmap and Java Object Layout (JOL) to understand the cost of memory for types in Java for quite a while now. In the case of jmap, I have been teaching how to use this very useful command line tool for over two decades. JOL I learned about a few years ago. I believe it has been around as a tool since late 2013. JOL is like a microscope (you can look at a single instance or class), and jmap is like a full body x-ray image (you see all the instances on your heap). Java developers should learn and keep both tools handy to understand the impact of implementation decisions on their Java heaps.

JOL and jmap can help you understand what is taking up space on your Java heap. How well they can do this is dependent on the names of the types provided by the libraries you use. If the names of your types are not explicit and instead are determined by anonymous inner classes, you’re going to have to go digging through source code to figure out what is meant by someClass$1, someClass$2, someClass$3, etc.
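A minimal sketch of the naming difference, assuming nothing beyond the JDK: the same Comparator written once as an anonymous inner class and once as a named nested class. The names InnerClassNames and ByLength are hypothetical and only serve the illustration.

```java
import java.util.Comparator;

// Hypothetical illustration class.
final class InnerClassNames {

    // A named nested class: its binary name ends with "$ByLength".
    static final class ByLength implements Comparator<String> {
        @Override
        public int compare(String left, String right) {
            return Integer.compare(left.length(), right.length());
        }
    }

    // An anonymous inner class: the compiler assigns it a numbered binary name.
    static Comparator<String> anonymous() {
        return new Comparator<String>() {
            @Override
            public int compare(String left, String right) {
                return Integer.compare(left.length(), right.length());
            }
        };
    }

    static Comparator<String> named() {
        return new ByLength();
    }

    public static void main(String[] args) {
        System.out.println(anonymous().getClass().getName());
        System.out.println(named().getClass().getName());
    }
}
```

With javac, the first line typically prints InnerClassNames$1 and the second prints InnerClassNames$ByLength. Both comparators behave identically, but only the second name tells a reader of JOL or jmap output what the object is for.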

When your friends can’t help you

Java Collections and Stream could be more helpful to developers by using explicit names for inner classes instead of relying on anonymous inner classes. Using anonymous inner classes pushes the pain of discovering the location of classes onto the developer. I encountered this pain this evening. I stared at output from JOL wondering “What is java.util.Collections$2?” I had never seen this type before. Do you know what it is? I certainly didn’t. Now I do. But I will forget what it means, and I can’t easily look it up, so I am writing it down here.

Do you know what java.util.stream.ReferencePipeline$2 is? What about java.util.stream.ReferencePipeline$3? What about java.util.stream.ReferencePipeline$5? What about java.util.stream.ReferencePipeline$Head?

Ok, to be fair, the last one has an explicit name (java.util.stream.ReferencePipeline$Head), but my guess is that most Java developers will probably have no idea what this type is or what it is used for. Many Java developers may not know what the type ReferencePipeline is used for in Java. If you already know it is THE implementation of Stream in the JDK, well done!

But what is java.util.Collections$2 and where does it come from?

I’m going to share two parameterized test methods I ran an experiment with along with their JOL output results, and see if you can guess what the types in the JOL output are. If you can’t figure out what java.util.Collections$2 is on your own, you can find out later in this blog.

In order to run the code examples in this blog, I used Java 25 with Compact Object Headers (COH) enabled and Java Object Layout (JOL) 0.17. The copyable text JVM setting for COH and latest Maven dependency info for JOL can be found in the following blog.

Some Benefits of Enabling Compact Object Headers in Java 25 for Streams

Setting to enable Compact Object Headers on Java 25

Latest Maven dependency Info for Java Object Layout (JOL)

Can you figure out the JDK types using JOL?

I have written a parameterized JUnit test that will use List instances from size 0 to 9, containing String representations of the indexes (e.g. List.of(), List.of(“1”), List.of(“1”, “2”), etc.). I am using the following code from JOL’s GraphLayout class to output the footprint of a single object.

GraphLayout.parseInstance(mapToLong).toFootprint()

Here’s the source code I used for the parameterized test:

@ParameterizedTest
@MethodSource("jdkListProvider")
public void javaTypesForImmutableListAndStream(List<String> list)
{
    var stream = list.stream();
    var filter = stream.filter(i -> true);
    var map = filter.map(Object::toString);
    var mapToLong = map.mapToLong(String::length);
    IO.println(GraphLayout.parseInstance(mapToLong).toFootprint());
    assertTrue(mapToLong.allMatch(each -> each == 1));
    IO.println("** stream = " + stream.getClass().getTypeName());
    IO.println("** filter = " + filter.getClass().getTypeName());
    IO.println("** map = " + map.getClass().getTypeName());
    IO.println("** mapToLong = " + mapToLong.getClass().getTypeName());
    IO.println("** Size of -> " + list.getClass().getSimpleName() + " = " + list.size());
    IO.println("=======================");
}

static Stream<Arguments> jdkListProvider()
{
    // Returns a Stream of Arguments containing List.of() from size 0 to 9
    // e.g. List.of(), List.of("1"), List.of("1", "2"), etc.
    var list = Stream.concat(
                    Stream.of(Arguments.of(List.of())),
                    IntStream.range(2, 11)
                            .mapToObj(i -> IntStream.range(1, i))
                            .map(s -> s.mapToObj(Integer::toString))
                            .map(Stream::toList)
                            .map(List::copyOf)
                            .map(Arguments::of))
            .collect(Collectors.toList());
    Collections.shuffle(list);
    return list.stream();
}

The output will be different each time, since I am shuffling the order of the List instances to make it harder to guess the size of the List you are looking at in the JOL output. Here is the output for one run. I show the JOL footprint for mapToLong, which winds up having references to everything that came before it in its object graph. I print the stage types and the List size at the end of each run to make the details easier to figure out, because it would possibly be too challenging and infuriating otherwise.

// JDK Types Run 1

java.util.stream.ReferencePipeline$5@53045c6cd footprint:

COUNT AVG SUM DESCRIPTION

5 16 80 [B

1 32 32 [Ljava.lang.Object;

5 24 120 java.lang.String

1 32 32 java.util.AbstractList$RandomAccessSpliterator

1 16 16 java.util.ImmutableCollections$ListN

1 56 56 java.util.stream.ReferencePipeline$2

1 56 56 java.util.stream.ReferencePipeline$3

1 56 56 java.util.stream.ReferencePipeline$5

1 48 48 java.util.stream.ReferencePipeline$Head

1 8 8 refactortoec.generation.StreamLazyIterableMemoryTest$$Lambda/0x0000100001273c00

1 8 8 refactortoec.generation.StreamLazyIterableMemoryTest$$Lambda/0x0000100001276000

1 8 8 refactortoec.generation.StreamLazyIterableMemoryTest$$Lambda/0x0000100001276400

20 520 (total)

** stream = java.util.stream.ReferencePipeline$Head

** filter = java.util.stream.ReferencePipeline$2

** map = java.util.stream.ReferencePipeline$3

** mapToLong = java.util.stream.ReferencePipeline$5

** Size of -> ListN = 5
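As a reading aid for the footprint table, here is a minimal sketch that recomputes the (total) line of Run 1 from its rows: each SUM is COUNT times AVG, and the totals are the column sums. The class name is hypothetical, and the rows are copied by hand from the output above.

```java
// Hypothetical helper; the rows are copied from the "JDK Types Run 1" output.
final class FootprintTotals {

    // Each row is {COUNT, AVG in bytes}.
    static final int[][] RUN_1 = {
        {5, 16}, // [B
        {1, 32}, // [Ljava.lang.Object;
        {5, 24}, // java.lang.String
        {1, 32}, // java.util.AbstractList$RandomAccessSpliterator
        {1, 16}, // java.util.ImmutableCollections$ListN
        {1, 56}, // java.util.stream.ReferencePipeline$2
        {1, 56}, // java.util.stream.ReferencePipeline$3
        {1, 56}, // java.util.stream.ReferencePipeline$5
        {1, 48}, // java.util.stream.ReferencePipeline$Head
        {1, 8},  // lambda
        {1, 8},  // lambda
        {1, 8}   // lambda
    };

    // Sum of the COUNT column.
    static int totalCount(int[][] rows) {
        int total = 0;
        for (int[] row : rows) {
            total += row[0];
        }
        return total;
    }

    // Sum of COUNT * AVG over all rows, which is the SUM column total.
    static int totalBytes(int[][] rows) {
        int total = 0;
        for (int[] row : rows) {
            total += row[0] * row[1];
        }
        return total;
    }

    public static void main(String[] args) {
        System.out.println(totalCount(RUN_1) + " " + totalBytes(RUN_1)); // 20 520
    }
}
```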

// JDK Types Run 2

java.util.stream.ReferencePipeline$5@1e097d59d footprint:

COUNT AVG SUM DESCRIPTION

7 16 112 [B

1 40 40 [Ljava.lang.Object;

7 24 168 java.lang.String

1 32 32 java.util.AbstractList$RandomAccessSpliterator

1 16 16 java.util.ImmutableCollections$ListN

1 56 56 java.util.stream.ReferencePipeline$2

1 56 56 java.util.stream.ReferencePipeline$3

1 56 56 java.util.stream.ReferencePipeline$5

1 48 48 java.util.stream.ReferencePipeline$Head

1 8 8 refactortoec.generation.StreamLazyIterableMemoryTest$$Lambda/0x0000100001273c00

1 8 8 refactortoec.generation.StreamLazyIterableMemoryTest$$Lambda/0x0000100001276000

1 8 8 refactortoec.generation.StreamLazyIterableMemoryTest$$Lambda/0x0000100001276400

24 608 (total)

** stream = java.util.stream.ReferencePipeline$Head

** filter = java.util.stream.ReferencePipeline$2

** map = java.util.stream.ReferencePipeline$3

** mapToLong = java.util.stream.ReferencePipeline$5

** Size of -> ListN = 7

// JDK Types Run 3

java.util.stream.ReferencePipeline$5@e57b96dd footprint:

COUNT AVG SUM DESCRIPTION

9 16 144 [B

1 48 48 [Ljava.lang.Object;

9 24 216 java.lang.String

1 32 32 java.util.AbstractList$RandomAccessSpliterator

1 16 16 java.util.ImmutableCollections$ListN

1 56 56 java.util.stream.ReferencePipeline$2

1 56 56 java.util.stream.ReferencePipeline$3

1 56 56 java.util.stream.ReferencePipeline$5

1 48 48 java.util.stream.ReferencePipeline$Head

1 8 8 refactortoec.generation.StreamLazyIterableMemoryTest$$Lambda/0x0000100001273c00

1 8 8 refactortoec.generation.StreamLazyIterableMemoryTest$$Lambda/0x0000100001276000

1 8 8 refactortoec.generation.StreamLazyIterableMemoryTest$$Lambda/0x0000100001276400

28 696 (total)

** stream = java.util.stream.ReferencePipeline$Head

** filter = java.util.stream.ReferencePipeline$2

** map = java.util.stream.ReferencePipeline$3

** mapToLong = java.util.stream.ReferencePipeline$5

** Size of -> ListN = 9

// JDK Types Run 4

java.util.stream.ReferencePipeline$5@3d3e5463d footprint:

COUNT AVG SUM DESCRIPTION

2 16 32 [B

2 24 48 java.lang.String

1 32 32 java.util.AbstractList$RandomAccessSpliterator

1 16 16 java.util.ImmutableCollections$List12

1 56 56 java.util.stream.ReferencePipeline$2

1 56 56 java.util.stream.ReferencePipeline$3

1 56 56 java.util.stream.ReferencePipeline$5

1 48 48 java.util.stream.ReferencePipeline$Head

1 8 8 refactortoec.generation.StreamLazyIterableMemoryTest$$Lambda/0x0000100001273c00

1 8 8 refactortoec.generation.StreamLazyIterableMemoryTest$$Lambda/0x0000100001276000

1 8 8 refactortoec.generation.StreamLazyIterableMemoryTest$$Lambda/0x0000100001276400

13 368 (total)

** stream = java.util.stream.ReferencePipeline$Head

** filter = java.util.stream.ReferencePipeline$2

** map = java.util.stream.ReferencePipeline$3

** mapToLong = java.util.stream.ReferencePipeline$5

** Size of -> List12 = 2

// JDK Types Run 5

java.util.stream.ReferencePipeline$5@31c7528fd footprint:

COUNT AVG SUM DESCRIPTION

1 16 16 [B

1 24 24 java.lang.String

1 24 24 java.util.Collections$2

1 56 56 java.util.stream.ReferencePipeline$2

1 56 56 java.util.stream.ReferencePipeline$3

1 56 56 java.util.stream.ReferencePipeline$5

1 48 48 java.util.stream.ReferencePipeline$Head

1 8 8 refactortoec.generation.StreamLazyIterableMemoryTest$$Lambda/0x0000100001273c00

1 8 8 refactortoec.generation.StreamLazyIterableMemoryTest$$Lambda/0x0000100001276000

1 8 8 refactortoec.generation.StreamLazyIterableMemoryTest$$Lambda/0x0000100001276400

10 304 (total)

** stream = java.util.stream.ReferencePipeline$Head

** filter = java.util.stream.ReferencePipeline$2

** map = java.util.stream.ReferencePipeline$3

** mapToLong = java.util.stream.ReferencePipeline$5

** Size of -> List12 = 1

// JDK Types Run 6

java.util.stream.ReferencePipeline$5@1b84f475d footprint:

COUNT AVG SUM DESCRIPTION

3 16 48 [B

1 24 24 [Ljava.lang.Object;

3 24 72 java.lang.String

1 32 32 java.util.AbstractList$RandomAccessSpliterator

1 16 16 java.util.ImmutableCollections$ListN

1 56 56 java.util.stream.ReferencePipeline$2

1 56 56 java.util.stream.ReferencePipeline$3

1 56 56 java.util.stream.ReferencePipeline$5

1 48 48 java.util.stream.ReferencePipeline$Head

1 8 8 refactortoec.generation.StreamLazyIterableMemoryTest$$Lambda/0x0000100001273c00

1 8 8 refactortoec.generation.StreamLazyIterableMemoryTest$$Lambda/0x0000100001276000

1 8 8 refactortoec.generation.StreamLazyIterableMemoryTest$$Lambda/0x0000100001276400

16 432 (total)

** stream = java.util.stream.ReferencePipeline$Head

** filter = java.util.stream.ReferencePipeline$2

** map = java.util.stream.ReferencePipeline$3

** mapToLong = java.util.stream.ReferencePipeline$5

** Size of -> ListN = 3

// JDK Types Run 7

java.util.stream.ReferencePipeline$5@5812f68bd footprint:

COUNT AVG SUM DESCRIPTION

6 16 96 [B

1 40 40 [Ljava.lang.Object;

6 24 144 java.lang.String

1 32 32 java.util.AbstractList$RandomAccessSpliterator

1 16 16 java.util.ImmutableCollections$ListN

1 56 56 java.util.stream.ReferencePipeline$2

1 56 56 java.util.stream.ReferencePipeline$3

1 56 56 java.util.stream.ReferencePipeline$5

1 48 48 java.util.stream.ReferencePipeline$Head

1 8 8 refactortoec.generation.StreamLazyIterableMemoryTest$$Lambda/0x0000100001273c00

1 8 8 refactortoec.generation.StreamLazyIterableMemoryTest$$Lambda/0x0000100001276000

1 8 8 refactortoec.generation.StreamLazyIterableMemoryTest$$Lambda/0x0000100001276400

22 568 (total)

** stream = java.util.stream.ReferencePipeline$Head

** filter = java.util.stream.ReferencePipeline$2

** map = java.util.stream.ReferencePipeline$3

** mapToLong = java.util.stream.ReferencePipeline$5

** Size of -> ListN = 6

// JDK Types Run 8

java.util.stream.ReferencePipeline$5@6d025197d footprint:

COUNT AVG SUM DESCRIPTION

4 16 64 [B

1 32 32 [Ljava.lang.Object;

4 24 96 java.lang.String

1 32 32 java.util.AbstractList$RandomAccessSpliterator

1 16 16 java.util.ImmutableCollections$ListN

1 56 56 java.util.stream.ReferencePipeline$2

1 56 56 java.util.stream.ReferencePipeline$3

1 56 56 java.util.stream.ReferencePipeline$5

1 48 48 java.util.stream.ReferencePipeline$Head

1 8 8 refactortoec.generation.StreamLazyIterableMemoryTest$$Lambda/0x0000100001273c00

1 8 8 refactortoec.generation.StreamLazyIterableMemoryTest$$Lambda/0x0000100001276000

1 8 8 refactortoec.generation.StreamLazyIterableMemoryTest$$Lambda/0x0000100001276400

18 480 (total)

** stream = java.util.stream.ReferencePipeline$Head

** filter = java.util.stream.ReferencePipeline$2

** map = java.util.stream.ReferencePipeline$3

** mapToLong = java.util.stream.ReferencePipeline$5

** Size of -> ListN = 4

// JDK Types Run 9

java.util.stream.ReferencePipeline$5@3a4621bdd footprint:

COUNT AVG SUM DESCRIPTION

8 16 128 [B

1 48 48 [Ljava.lang.Object;

8 24 192 java.lang.String

1 32 32 java.util.AbstractList$RandomAccessSpliterator

1 16 16 java.util.ImmutableCollections$ListN

1 56 56 java.util.stream.ReferencePipeline$2

1 56 56 java.util.stream.ReferencePipeline$3

1 56 56 java.util.stream.ReferencePipeline$5

1 48 48 java.util.stream.ReferencePipeline$Head

1 8 8 refactortoec.generation.StreamLazyIterableMemoryTest$$Lambda/0x0000100001273c00

1 8 8 refactortoec.generation.StreamLazyIterableMemoryTest$$Lambda/0x0000100001276000

1 8 8 refactortoec.generation.StreamLazyIterableMemoryTest$$Lambda/0x0000100001276400

26 656 (total)

** stream = java.util.stream.ReferencePipeline$Head

** filter = java.util.stream.ReferencePipeline$2

** map = java.util.stream.ReferencePipeline$3

** mapToLong = java.util.stream.ReferencePipeline$5

** Size of -> ListN = 8

// JDK Types Run 10

java.util.stream.ReferencePipeline$5@4f9a2c08d footprint:

COUNT AVG SUM DESCRIPTION

1 16 16 [Ljava.lang.Object;

1 32 32 java.util.AbstractList$RandomAccessSpliterator

1 16 16 java.util.ImmutableCollections$ListN

1 56 56 java.util.stream.ReferencePipeline$2

1 56 56 java.util.stream.ReferencePipeline$3

1 56 56 java.util.stream.ReferencePipeline$5

1 48 48 java.util.stream.ReferencePipeline$Head

1 8 8 refactortoec.generation.StreamLazyIterableMemoryTest$$Lambda/0x0000100001273c00

1 8 8 refactortoec.generation.StreamLazyIterableMemoryTest$$Lambda/0x0000100001276000

1 8 8 refactortoec.generation.StreamLazyIterableMemoryTest$$Lambda/0x0000100001276400

10 304 (total)

** stream = java.util.stream.ReferencePipeline$Head

** filter = java.util.stream.ReferencePipeline$2

** map = java.util.stream.ReferencePipeline$3

** mapToLong = java.util.stream.ReferencePipeline$5

** Size of -> ListN = 0

This is the order of the List parameters for this run according to the JUnit runner in IntelliJ.

The order of parameterized runs in JUnit for JDK Types

Here’s a link to the source code in a project in GitHub.

Can you figure out the Eclipse Collections types using JOL?

I have written a parameterized JUnit test that will use ImmutableList instances from size 0 to 9, containing String representations of the indexes (e.g. Lists.immutable.of(), Lists.immutable.of(“1”), Lists.immutable.of(“1”, “2”), etc.). I am using the following code from JOL’s GraphLayout class to output the footprint of a single object.

GraphLayout.parseInstance(collectLong).toFootprint()

Here’s the source code I used for the parameterized test:

@ParameterizedTest
@MethodSource("ecImmutableListProvider")
public void eclipseCollectionTypesForImmutableListAndLazy(ImmutableList<String> list)
{
    var lazy = list.asLazy();
    var select = lazy.select(i -> true);
    var collect = select.collect(Object::toString);
    var collectLong = collect.collectLong(String::length);
    IO.println(GraphLayout.parseInstance(collectLong).toFootprint());
    assertTrue(collectLong.allSatisfy(each -> each == 1));
}

static Stream<Arguments> ecImmutableListProvider()
{
    // Returns a Stream of Arguments containing Lists.immutable.of() from size 0 to 9
    // e.g. Lists.immutable.of(), Lists.immutable.of("1"), Lists.immutable.of("1", "2"), etc.
    return Lists.mutable.of(Arguments.of(Lists.immutable.of()))
            .withAll(
                    IntInterval.oneTo(9)
                            .collect(IntInterval::oneTo)
                            .collect(v -> v.collect(Integer::toString))
                            .collect(Arguments::of))
            .shuffleThis()
            .stream();
}

I removed all of the helpful outputs that I had to use for JDK, to see if you can figure out the Eclipse Collections types and List size with only the JOL output to help you figure things out.

// Eclipse Collections Types Run 1

org.eclipse.collections.impl.lazy.primitive.CollectLongIterable@4bff7da0d footprint:

COUNT AVG SUM DESCRIPTION

9 16 144 [B

9 24 216 java.lang.String

1 16 16 org.eclipse.collections.impl.lazy.CollectIterable

1 16 16 org.eclipse.collections.impl.lazy.SelectIterable

1 24 24 org.eclipse.collections.impl.lazy.primitive.CollectLongIterable

1 16 16 org.eclipse.collections.impl.lazy.primitive.CollectLongIterable$LongFunctionToProcedure

1 48 48 org.eclipse.collections.impl.list.immutable.ImmutableNonupletonList

1 8 8 refactortoec.generation.StreamLazyIterableMemoryTest$$Lambda/0x000007f0012ab400

1 8 8 refactortoec.generation.StreamLazyIterableMemoryTest$$Lambda/0x000007f0012b1400

1 8 8 refactortoec.generation.StreamLazyIterableMemoryTest$$Lambda/0x000007f0012b2c00

26 504 (total)

// Eclipse Collections Types Run 2

org.eclipse.collections.impl.lazy.primitive.CollectLongIterable@39a8312fd footprint:

COUNT AVG SUM DESCRIPTION

8 16 128 [B

8 24 192 java.lang.String

1 16 16 org.eclipse.collections.impl.lazy.CollectIterable

1 16 16 org.eclipse.collections.impl.lazy.SelectIterable

1 24 24 org.eclipse.collections.impl.lazy.primitive.CollectLongIterable

1 16 16 org.eclipse.collections.impl.lazy.primitive.CollectLongIterable$LongFunctionToProcedure

1 40 40 org.eclipse.collections.impl.list.immutable.ImmutableOctupletonList

1 8 8 refactortoec.generation.StreamLazyIterableMemoryTest$$Lambda/0x000007f0012ab400

1 8 8 refactortoec.generation.StreamLazyIterableMemoryTest$$Lambda/0x000007f0012b1400

1 8 8 refactortoec.generation.StreamLazyIterableMemoryTest$$Lambda/0x000007f0012b2c00

24 456 (total)

// Eclipse Collections Types Run 3

org.eclipse.collections.impl.lazy.primitive.CollectLongIterable@674bd420d footprint:

COUNT AVG SUM DESCRIPTION

1 16 16 [B

1 24 24 java.lang.String

1 16 16 org.eclipse.collections.impl.lazy.CollectIterable

1 16 16 org.eclipse.collections.impl.lazy.SelectIterable

1 24 24 org.eclipse.collections.impl.lazy.primitive.CollectLongIterable

1 16 16 org.eclipse.collections.impl.lazy.primitive.CollectLongIterable$LongFunctionToProcedure

1 16 16 org.eclipse.collections.impl.list.immutable.ImmutableSingletonList

1 8 8 refactortoec.generation.StreamLazyIterableMemoryTest$$Lambda/0x000007f0012ab400

1 8 8 refactortoec.generation.StreamLazyIterableMemoryTest$$Lambda/0x000007f0012b1400

1 8 8 refactortoec.generation.StreamLazyIterableMemoryTest$$Lambda/0x000007f0012b2c00

10 152 (total)

// Eclipse Collections Types Run 4

org.eclipse.collections.impl.lazy.primitive.CollectLongIterable@70a36a66d footprint:

COUNT AVG SUM DESCRIPTION

4 16 64 [B

4 24 96 java.lang.String

1 16 16 org.eclipse.collections.impl.lazy.CollectIterable

1 16 16 org.eclipse.collections.impl.lazy.SelectIterable

1 24 24 org.eclipse.collections.impl.lazy.primitive.CollectLongIterable

1 16 16 org.eclipse.collections.impl.lazy.primitive.CollectLongIterable$LongFunctionToProcedure

1 24 24 org.eclipse.collections.impl.list.immutable.ImmutableQuadrupletonList

1 8 8 refactortoec.generation.StreamLazyIterableMemoryTest$$Lambda/0x000007f0012ab400

1 8 8 refactortoec.generation.StreamLazyIterableMemoryTest$$Lambda/0x000007f0012b1400

1 8 8 refactortoec.generation.StreamLazyIterableMemoryTest$$Lambda/0x000007f0012b2c00

16 280 (total)

// Eclipse Collections Types Run 5

org.eclipse.collections.impl.lazy.primitive.CollectLongIterable@1d131e1bd footprint:

COUNT AVG SUM DESCRIPTION

7 16 112 [B

7 24 168 java.lang.String

1 16 16 org.eclipse.collections.impl.lazy.CollectIterable

1 16 16 org.eclipse.collections.impl.lazy.SelectIterable

1 24 24 org.eclipse.collections.impl.lazy.primitive.CollectLongIterable

1 16 16 org.eclipse.collections.impl.lazy.primitive.CollectLongIterable$LongFunctionToProcedure

1 40 40 org.eclipse.collections.impl.list.immutable.ImmutableSeptupletonList

1 8 8 refactortoec.generation.StreamLazyIterableMemoryTest$$Lambda/0x000007f0012ab400

1 8 8 refactortoec.generation.StreamLazyIterableMemoryTest$$Lambda/0x000007f0012b1400

1 8 8 refactortoec.generation.StreamLazyIterableMemoryTest$$Lambda/0x000007f0012b2c00

22 416 (total)

// Eclipse Collections Types Run 6

org.eclipse.collections.impl.lazy.primitive.CollectLongIterable@56303b57d footprint:

COUNT AVG SUM DESCRIPTION

6 16 96 [B

6 24 144 java.lang.String

1 16 16 org.eclipse.collections.impl.lazy.CollectIterable

1 16 16 org.eclipse.collections.impl.lazy.SelectIterable

1 24 24 org.eclipse.collections.impl.lazy.primitive.CollectLongIterable

1 16 16 org.eclipse.collections.impl.lazy.primitive.CollectLongIterable$LongFunctionToProcedure

1 32 32 org.eclipse.collections.impl.list.immutable.ImmutableSextupletonList

1 8 8 refactortoec.generation.StreamLazyIterableMemoryTest$$Lambda/0x000007f0012ab400

1 8 8 refactortoec.generation.StreamLazyIterableMemoryTest$$Lambda/0x000007f0012b1400

1 8 8 refactortoec.generation.StreamLazyIterableMemoryTest$$Lambda/0x000007f0012b2c00

20 368 (total)

// Eclipse Collections Types Run 7

org.eclipse.collections.impl.lazy.primitive.CollectLongIterable@3724af13d footprint:

COUNT AVG SUM DESCRIPTION

5 16 80 [B

5 24 120 java.lang.String

1 16 16 org.eclipse.collections.impl.lazy.CollectIterable

1 16 16 org.eclipse.collections.impl.lazy.SelectIterable

1 24 24 org.eclipse.collections.impl.lazy.primitive.CollectLongIterable

1 16 16 org.eclipse.collections.impl.lazy.primitive.CollectLongIterable$LongFunctionToProcedure

1 32 32 org.eclipse.collections.impl.list.immutable.ImmutableQuintupletonList

1 8 8 refactortoec.generation.StreamLazyIterableMemoryTest$$Lambda/0x000007f0012ab400

1 8 8 refactortoec.generation.StreamLazyIterableMemoryTest$$Lambda/0x000007f0012b1400

1 8 8 refactortoec.generation.StreamLazyIterableMemoryTest$$Lambda/0x000007f0012b2c00

18 328 (total)

// Eclipse Collections Types Run 8

org.eclipse.collections.impl.lazy.primitive.CollectLongIterable@7e7b159bd footprint:

COUNT AVG SUM DESCRIPTION

2 16 32 [B

2 24 48 java.lang.String

1 16 16 org.eclipse.collections.impl.lazy.CollectIterable

1 16 16 org.eclipse.collections.impl.lazy.SelectIterable

1 24 24 org.eclipse.collections.impl.lazy.primitive.CollectLongIterable

1 16 16 org.eclipse.collections.impl.lazy.primitive.CollectLongIterable$LongFunctionToProcedure

1 16 16 org.eclipse.collections.impl.list.immutable.ImmutableDoubletonList

1 8 8 refactortoec.generation.StreamLazyIterableMemoryTest$$Lambda/0x000007f0012ab400

1 8 8 refactortoec.generation.StreamLazyIterableMemoryTest$$Lambda/0x000007f0012b1400

1 8 8 refactortoec.generation.StreamLazyIterableMemoryTest$$Lambda/0x000007f0012b2c00

12 192 (total)

// Eclipse Collections Types Run 9

org.eclipse.collections.impl.lazy.primitive.CollectLongIterable@40dff0b7d footprint:

COUNT AVG SUM DESCRIPTION

3 16 48 [B

3 24 72 java.lang.String

1 16 16 org.eclipse.collections.impl.lazy.CollectIterable

1 16 16 org.eclipse.collections.impl.lazy.SelectIterable

1 24 24 org.eclipse.collections.impl.lazy.primitive.CollectLongIterable

1 16 16 org.eclipse.collections.impl.lazy.primitive.CollectLongIterable$LongFunctionToProcedure

1 24 24 org.eclipse.collections.impl.list.immutable.ImmutableTripletonList

1 8 8 refactortoec.generation.StreamLazyIterableMemoryTest$$Lambda/0x000007f0012ab400

1 8 8 refactortoec.generation.StreamLazyIterableMemoryTest$$Lambda/0x000007f0012b1400

1 8 8 refactortoec.generation.StreamLazyIterableMemoryTest$$Lambda/0x000007f0012b2c00

14 240 (total)

// Eclipse Collections Types Run 10

org.eclipse.collections.impl.lazy.primitive.CollectLongIterable@7e8dcdaad footprint:

COUNT AVG SUM DESCRIPTION

1 16 16 org.eclipse.collections.impl.lazy.CollectIterable

1 16 16 org.eclipse.collections.impl.lazy.SelectIterable

1 24 24 org.eclipse.collections.impl.lazy.primitive.CollectLongIterable

1 16 16 org.eclipse.collections.impl.lazy.primitive.CollectLongIterable$LongFunctionToProcedure

1 8 8 org.eclipse.collections.impl.list.immutable.ImmutableEmptyList

1 8 8 refactortoec.generation.StreamLazyIterableMemoryTest$$Lambda/0x000007f0012ab400

1 8 8 refactortoec.generation.StreamLazyIterableMemoryTest$$Lambda/0x000007f0012b1400

1 8 8 refactortoec.generation.StreamLazyIterableMemoryTest$$Lambda/0x000007f0012b2c00

8 104 (total)

This is the order of the List parameters for this run according to the JUnit runner in IntelliJ.

The order of parameterized runs in JUnit for Eclipse Collections Types

Here’s a link to the source code in GitHub.

What is java.util.Collections$2 in the JDK output?

Nothing in the sample code I provided can help us discover directly what java.util.Collections$2 represents. There is a hint if we look at the size at which this type shows up, which is size 1. The type of List for this size is List12. Do you pronounce this type as List Twelve, or List One or Two? I think ListOneOrTwo might have been a more intention-revealing name. At least I wouldn’t have read it as “List Twelve” the first time I saw this type in JOL or jmap output.
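As a quick way to see the List12 naming for yourself, here is a minimal sketch, assuming JDK 11 or later, that prints the implementation class behind List.of() for a few sizes. The class name List12Sketch and the helper implementationName are hypothetical names used only for illustration. The last line prints the spliterator type for a single-element list; what it prints depends on the JDK version, so no expected output is claimed here.

```java
import java.util.List;

public class List12Sketch {

    // Hypothetical helper: the simple name of the runtime class behind a List.
    static String implementationName(List<?> list) {
        return list.getClass().getSimpleName();
    }

    public static void main(String[] args) {
        // Sizes one and two are expected to share one implementation type,
        // while other sizes use a different one (assumption: JDK 11 or later).
        System.out.println("size 0: " + implementationName(List.of()));
        System.out.println("size 1: " + implementationName(List.of("1")));
        System.out.println("size 2: " + implementationName(List.of("1", "2")));
        System.out.println("size 3: " + implementationName(List.of("1", "2", "3")));

        // The spliterator type for a single-element list varies by JDK version.
        System.out.println("spliterator: "
                + List.of("1").spliterator().getClass().getName());
    }
}
```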

Now we have to look in the type List12 for something special about size one. Found it.

The spliterator implementation in List12

Now if you’re wondering why Collections.singletonSpliterator(e0) results in a type named java.util.Collections$2, welcome to the club.

The beginning of the anonymous inner class returned by Collections.singletonSpliterator()

This doesn’t immediately explain why the List12 instance has mysteriously disappeared from the JOL output. We can reason about why this happens from the code in the spliterator() method above. A Stream will hold onto a Spliterator. A Spliterator like RandomAccessSpliterator will hold onto the List it is for, as seen below.

RandomAccessSpliterator keeps a reference to the List it is for

The Spliterator returned by Collections.singletonSpliterator() instead holds onto the single element, which in this particular case was the String “1”. We don’t see a variable declaration inside of the anonymous inner class above, but if we look a little farther down the code, we will see there is a capture of the element reference in the anonymous inner class, which has the effect of creating a field in the type.

See the parameter element captured in the tryAdvance method, where it is shown in italics

I would propose that a more helpful name for the anonymous inner class returned by singletonSpliterator would be SingletonSpliterator.
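Both effects described above, the numbered class name and the field created by a capture, can be reproduced in a few lines. The following is a minimal sketch, not the JDK code: AnonymousCaptureSketch and singletonSupplier are hypothetical names, and a Supplier stands in for the Spliterator to keep the example short. The exact name of the generated field is a compiler detail, so the sketch prints it rather than assuming it.

```java
import java.lang.reflect.Field;
import java.util.function.Supplier;

public class AnonymousCaptureSketch {

    // Hypothetical factory method: returns an anonymous inner class that
    // captures the parameter `element` without declaring a field for it.
    static <T> Supplier<T> singletonSupplier(T element) {
        return new Supplier<T>() {
            @Override
            public T get() {
                return element; // the captured parameter
            }
        };
    }

    public static void main(String[] args) {
        Supplier<String> supplier = singletonSupplier("1");
        Class<?> type = supplier.getClass();

        // Anonymous classes get a compiler-assigned name: the enclosing
        // class name, a '$', and a number.
        System.out.println("name: " + type.getName());
        System.out.println("anonymous: " + type.isAnonymousClass());

        // The capture shows up as a compiler-generated (synthetic) field.
        for (Field field : type.getDeclaredFields()) {
            System.out.println("field: " + field.getName()
                    + " synthetic=" + field.isSynthetic());
        }
    }
}
```

Because the captured reference becomes a field, a memory tool that walks the object graph will follow it to the element, which is consistent with the String showing up in the footprint while the list does not.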

Why don’t some of these types have names?

I don’t know the answer to this, but I hope it helps to ask. There are reasons why there are only List12 and ListN types in the JDK, and it has to do with megamorphism impacts on performance. I wrote a bit about this in the following blog.

Sweating the small stuff in Java

This doesn’t help explain why there are no named types inside of ReferencePipeline for filter, map, mapToLong and other kinds of pipeline types. We can see all of the anonymous types more easily by looking at all the subtypes of ReferencePipeline.

ReferencePipeline subtypes

If we go up one level to the Stream interface, this is what the hierarchy looks like.

Stream subtypes

If we compare this to LazyIterable subtypes in Eclipse Collections, we can see that there are no anonymous LazyIterable types in the Eclipse Collections library. There are many more LazyIterable types in Eclipse Collections than there are in the JDK for Stream, so I can’t just take a screenshot, but I can copy all the types to the clipboard. Notice that none of the types say “Anonymous.”

AbstractLazyIterable (org.eclipse.collections.impl.lazy)

ChunkBooleanIterable (org.eclipse.collections.impl.lazy.primitive)

ChunkByteIterable (org.eclipse.collections.impl.lazy.primitive)

ChunkCharIterable (org.eclipse.collections.impl.lazy.primitive)

ChunkDoubleIterable (org.eclipse.collections.impl.lazy.primitive)

ChunkFloatIterable (org.eclipse.collections.impl.lazy.primitive)

ChunkIntIterable (org.eclipse.collections.impl.lazy.primitive)

ChunkIterable (org.eclipse.collections.impl.lazy)

ChunkLongIterable (org.eclipse.collections.impl.lazy.primitive)

ChunkShortIterable (org.eclipse.collections.impl.lazy.primitive)

CollectBooleanToObjectIterable (org.eclipse.collections.impl.lazy.primitive)

CollectByteToObjectIterable (org.eclipse.collections.impl.lazy.primitive)

CollectCharToObjectIterable (org.eclipse.collections.impl.lazy.primitive)

CollectDoubleToObjectIterable (org.eclipse.collections.impl.lazy.primitive)

CollectFloatToObjectIterable (org.eclipse.collections.impl.lazy.primitive)

CollectIntToObjectIterable (org.eclipse.collections.impl.lazy.primitive)

CollectIterable (org.eclipse.collections.impl.lazy)

CollectLongToObjectIterable (org.eclipse.collections.impl.lazy.primitive)

CollectShortToObjectIterable (org.eclipse.collections.impl.lazy.primitive)

CompositeIterable (org.eclipse.collections.impl.lazy)

DistinctIterable (org.eclipse.collections.impl.lazy)

DropIterable (org.eclipse.collections.impl.lazy)

DropWhileIterable (org.eclipse.collections.impl.lazy)

FlatCollectBooleanToObjectIterable (org.eclipse.collections.impl.lazy.primitive)

FlatCollectByteToObjectIterable (org.eclipse.collections.impl.lazy.primitive)

FlatCollectCharToObjectIterable (org.eclipse.collections.impl.lazy.primitive)

FlatCollectDoubleToObjectIterable (org.eclipse.collections.impl.lazy.primitive)

FlatCollectFloatToObjectIterable (org.eclipse.collections.impl.lazy.primitive)

FlatCollectIntToObjectIterable (org.eclipse.collections.impl.lazy.primitive)

FlatCollectIterable (org.eclipse.collections.impl.lazy)

FlatCollectLongToObjectIterable (org.eclipse.collections.impl.lazy.primitive)

FlatCollectShortToObjectIterable (org.eclipse.collections.impl.lazy.primitive)

Interval (org.eclipse.collections.impl.list)

KeysView in ObjectBooleanHashMap (org.eclipse.collections.impl.map.mutable.primitive)

KeysView in ObjectBooleanHashMapWithHashingStrategy (org.eclipse.collections.impl.map.mutable.primitive)

KeysView in ObjectByteHashMap (org.eclipse.collections.impl.map.mutable.primitive)

KeysView in ObjectByteHashMapWithHashingStrategy (org.eclipse.collections.impl.map.mutable.primitive)

KeysView in ObjectCharHashMap (org.eclipse.collections.impl.map.mutable.primitive)

KeysView in ObjectCharHashMapWithHashingStrategy (org.eclipse.collections.impl.map.mutable.primitive)

KeysView in ObjectDoubleHashMap (org.eclipse.collections.impl.map.mutable.primitive)

KeysView in ObjectDoubleHashMapWithHashingStrategy (org.eclipse.collections.impl.map.mutable.primitive)

KeysView in ObjectFloatHashMap (org.eclipse.collections.impl.map.mutable.primitive)

KeysView in ObjectFloatHashMapWithHashingStrategy (org.eclipse.collections.impl.map.mutable.primitive)

KeysView in ObjectIntHashMap (org.eclipse.collections.impl.map.mutable.primitive)

KeysView in ObjectIntHashMapWithHashingStrategy (org.eclipse.collections.impl.map.mutable.primitive)

KeysView in ObjectLongHashMap (org.eclipse.collections.impl.map.mutable.primitive)

KeysView in ObjectLongHashMapWithHashingStrategy (org.eclipse.collections.impl.map.mutable.primitive)

KeysView in ObjectShortHashMap (org.eclipse.collections.impl.map.mutable.primitive)

KeysView in ObjectShortHashMapWithHashingStrategy (org.eclipse.collections.impl.map.mutable.primitive)

KeyValuesView in ByteBooleanHashMap (org.eclipse.collections.impl.map.mutable.primitive)

KeyValuesView in ByteByteHashMap (org.eclipse.collections.impl.map.mutable.primitive)

KeyValuesView in ByteCharHashMap (org.eclipse.collections.impl.map.mutable.primitive)

KeyValuesView in ByteDoubleHashMap (org.eclipse.collections.impl.map.mutable.primitive)

KeyValuesView in ByteFloatHashMap (org.eclipse.collections.impl.map.mutable.primitive)

KeyValuesView in ByteIntHashMap (org.eclipse.collections.impl.map.mutable.primitive)

KeyValuesView in ByteLongHashMap (org.eclipse.collections.impl.map.mutable.primitive)

KeyValuesView in ByteObjectHashMap (org.eclipse.collections.impl.map.mutable.primitive)

KeyValuesView in ByteShortHashMap (org.eclipse.collections.impl.map.mutable.primitive)

KeyValuesView in CharBooleanHashMap (org.eclipse.collections.impl.map.mutable.primitive)

KeyValuesView in CharByteHashMap (org.eclipse.collections.impl.map.mutable.primitive)

KeyValuesView in CharCharHashMap (org.eclipse.collections.impl.map.mutable.primitive)

KeyValuesView in CharDoubleHashMap (org.eclipse.collections.impl.map.mutable.primitive)

KeyValuesView in CharFloatHashMap (org.eclipse.collections.impl.map.mutable.primitive)

KeyValuesView in CharIntHashMap (org.eclipse.collections.impl.map.mutable.primitive)

KeyValuesView in CharLongHashMap (org.eclipse.collections.impl.map.mutable.primitive)

KeyValuesView in CharObjectHashMap (org.eclipse.collections.impl.map.mutable.primitive)

KeyValuesView in CharShortHashMap (org.eclipse.collections.impl.map.mutable.primitive)

KeyValuesView in DoubleBooleanHashMap (org.eclipse.collections.impl.map.mutable.primitive)

KeyValuesView in DoubleByteHashMap (org.eclipse.collections.impl.map.mutable.primitive)

KeyValuesView in DoubleCharHashMap (org.eclipse.collections.impl.map.mutable.primitive)

KeyValuesView in DoubleDoubleHashMap (org.eclipse.collections.impl.map.mutable.primitive)

KeyValuesView in DoubleFloatHashMap (org.eclipse.collections.impl.map.mutable.primitive)

KeyValuesView in DoubleIntHashMap (org.eclipse.collections.impl.map.mutable.primitive)

KeyValuesView in DoubleLongHashMap (org.eclipse.collections.impl.map.mutable.primitive)

KeyValuesView in DoubleObjectHashMap (org.eclipse.collections.impl.map.mutable.primitive)

KeyValuesView in DoubleShortHashMap (org.eclipse.collections.impl.map.mutable.primitive)

KeyValuesView in FloatBooleanHashMap (org.eclipse.collections.impl.map.mutable.primitive)

KeyValuesView in FloatByteHashMap (org.eclipse.collections.impl.map.mutable.primitive)

KeyValuesView in FloatCharHashMap (org.eclipse.collections.impl.map.mutable.primitive)

KeyValuesView in FloatDoubleHashMap (org.eclipse.collections.impl.map.mutable.primitive)

KeyValuesView in FloatFloatHashMap (org.eclipse.collections.impl.map.mutable.primitive)

KeyValuesView in FloatIntHashMap (org.eclipse.collections.impl.map.mutable.primitive)